Why Human Accountability Still Matters in the Age of AI

Leadership takeaway

- AI adoption rises when leaders keep accountability visible.

- The wrong message is replacement; the right message is supported judgment.

- Early wins should come from low-risk, practical use cases, not high-stakes autonomy.

- Trust-building and AI adoption should reinforce each other, not compete.

One of the most useful findings in the AI and Trust at Work white paper is also one of the easiest to miss: people are not rejecting AI outright. They are drawing boundaries around where trust belongs.

That is good news for leaders. It means adoption does not have to begin with a culture battle. But it also means leaders need to communicate carefully. When organizations present AI as a full substitute for judgment, oversight, or relationships, they step directly into the zone where trust is weakest. When they present AI as a tool that improves speed and quality while keeping people accountable, confidence rises.

If you want employees to trust AI more, here are four lessons from the white paper that deserve attention.

1. Make accountability visible, not implied.

The survey found that confidence in AI was much higher when a person remained accountable for the outcome. That matters because many organizations assume trust will rise automatically once a tool performs well enough. In reality, performance and accountability work together.

People want to know who is reviewing important outputs, who owns the final decision, and what happens when the system gets something wrong. If those answers are vague, trust remains fragile. If those answers are clear, adoption becomes much easier.

2. Start with work that is helpful, not work that is emotionally loaded.

The strongest AI use cases in the survey were practical ones: summarization, drafting, research, coding support, and repetitive work. Use of AI for sensitive or personal matters was much lower overall, even though a smaller subgroup had clearly begun using it that way.

That tells leaders something important about sequencing. Adoption should begin where AI is easiest to evaluate and easiest to correct. Let people see the value in routine work before asking them to trust AI in higher-stakes or emotionally complex settings.

3. Do not confuse efficiency with trust.

Teams may use AI every day and still reserve trust for human beings. The white paper showed moderate trust in daily use, but much lower willingness to treat AI like a trusted colleague.

This distinction protects leaders from a common mistake. High usage does not automatically mean high confidence. Sometimes it simply means the tool is fast, convenient, or unavoidable. If leaders want to understand whether adoption is healthy, they need to measure confidence, comfort, and perceived accountability, not just frequency of use.

4. Reinforce the value of human relationships as adoption grows.

Perhaps the most reassuring finding in the white paper is that stronger AI use did not correspond with lower belief in the importance of human relationships. Respondents still overwhelmingly believed that human relationships remain essential for trust at work.

Leaders should build on that instinct rather than fight it. The best AI strategies do not pit efficiency against humanity. They free people from lower-value cognitive labor so they can invest more attention in coaching, judgment, communication, and difficult conversations.

There is also a broader cultural lesson here. Trust rarely disappears in one dramatic moment. More often, it weakens when people feel decisions are becoming less explainable, less accountable, or less human. AI can intensify that fear if leaders are careless. But it can also reduce friction and improve performance when leaders make the rules, the ownership, and the boundaries unmistakably clear.

In other words, the question is not whether AI can be trusted in the abstract. The better question is this: under what conditions are people willing to trust it more? This white paper offers a strong answer. People trust AI more when it helps with real work, when a person still owns the outcome, and when its use does not threaten the human relationships that make work function in the first place.

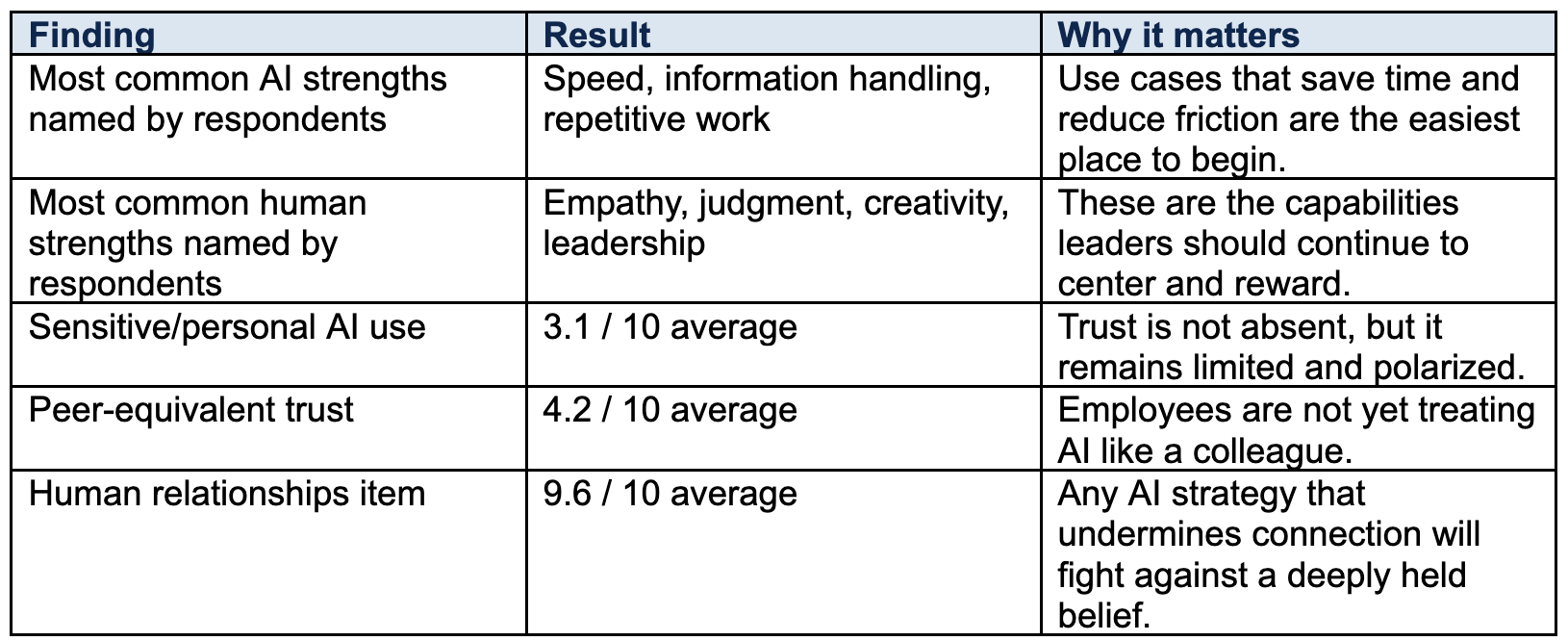

Key data points

For leaders, the path forward is straightforward. Introduce AI where it clearly helps. Keep review and ownership visible. Measure trust directly. And keep investing in the human side of work, because the human side is still where trust lives.

For stronger AI adoption, don’t just automate the workflow. Build trust in the people using it.
