What Employees Really Trust AI to Do at Work

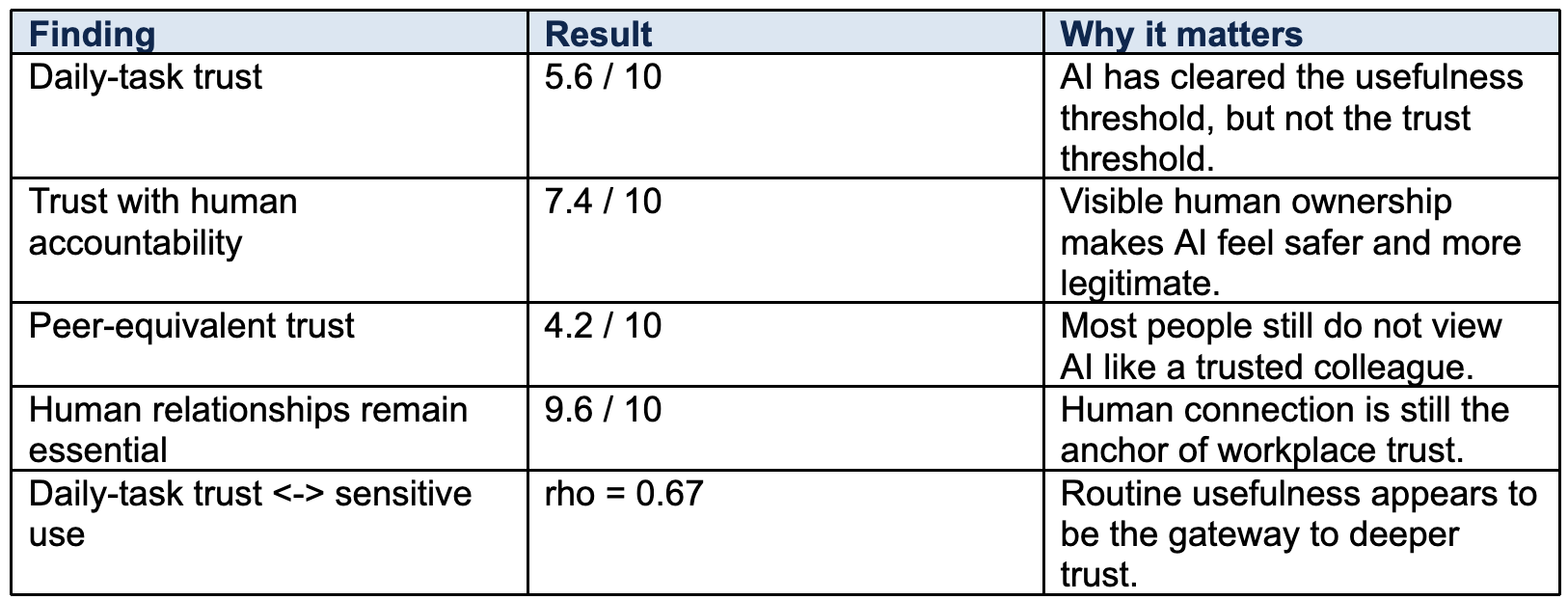

At a glance

- People are increasingly willing to use AI for speed, structure, and routine work.

- They are much less willing to trust AI the way they trust a human colleague.

- Trust rises sharply when a person remains accountable for the outcome.

- Higher AI use did not make respondents value human relationships any less.

Organizations everywhere are trying to answer the same question: How much trust will people actually place in AI at work?

A March 2026 survey summarized in the AI and Trust at Work white paper suggests a clear answer. People are open to AI as a useful helper. They are not yet ready to treat it as a trusted peer. That distinction matters because it tells leaders where adoption is most likely to succeed and where it is most likely to stall.

The strongest pattern in the data is simple: operational usefulness is moving faster than relational trust. Respondents gave AI a moderate score for day-to-day task support, but a much lower score when asked whether AI-generated insights were as trustworthy as those of a human colleague. In other words, many people are comfortable using AI to move faster. Far fewer are comfortable granting it the same credibility they would give a person.

That gap is not a small technical detail. It is the heart of the trust question.

1. AI is earning a role in the workflow, not in the relationship.

Across the survey, daily-task trust averaged 5.6 out of 10. Agreement that AI insights are as trustworthy as those of a human colleague averaged just 4.2. Only one respondent rated that peer-equivalence question at 8 or higher.

That tells us people are finding value in AI, but they are still keeping it in a bounded role. It can help draft, summarize, research, organize, and analyze. It has not replaced the need for human judgment, credibility, or lived context.

2. Human accountability is the biggest trust amplifier in the data.

When respondents considered AI use in situations where a person remains accountable, the average score rose to 7.4 out of 10. This was one of the strongest signals in the entire white paper.

The implication is practical. If leaders want healthy adoption, they should not lead with autonomy. They should lead with visible ownership, review, and decision rights. People trust AI more when they know a human being is still responsible for what happens next.

3. Human relationships remain the foundation.

The strongest item in the survey was not about AI at all. It was the statement that human relationships remain essential for trust at work, which averaged 9.6 out of 10.

Just as important, this belief did not weaken among people who were more comfortable using AI. The data found essentially no negative relationship between stronger AI use and belief in the importance of human relationships. That is a crucial reminder for leaders who worry that AI adoption and human trust are somehow on a collision course. In this sample, they were not.

4. Trust appears to deepen in stages.

The most interesting correlations in the white paper point to a trust ladder. People who trust AI for daily tasks are more likely to trust it in more personal or reflective contexts. The strongest relationship in the dataset connected daily-task trust and sensitive or personal AI use.

A second strong relationship connected daily-task trust and peer-equivalent trust. The message is not that everyone is moving toward deep trust. It is that routine usefulness appears to be the entry point. When AI proves itself in lower-risk work, some people begin extending that trust further.

5. The role split is remarkably consistent.

The qualitative responses reinforce the quantitative story. Respondents repeatedly described AI as strongest in speed, summarization, drafting, coding, and repetitive work. They described humans as strongest in empathy, judgment, creativity, leadership, and morally consequential decisions.

This is not a workforce asking to outsource trust. It is a workforce asking for help with speed and structure while preserving human authority in relational and consequential work.


What should leaders do with this? Start by framing AI correctly. Position it as a co-pilot, not a peer replacement. Make human accountability visible. Roll out low-risk use cases first. And do not make the mistake of treating efficiency gains as a substitute for trust-building between people.

The organizations that handle AI well will not be the ones that push the hardest for full automation. They will be the ones that understand the difference between assistance and authority, then design their systems around that reality.

Don’t guess why people hesitate with AI. Measure trust directly.
